When I told a friend about my new job as a quantum computing technologist for Digital Catapult he asked me if quantum computing was like nuclear fusion: a technology with great potential but with no benefits despite decades of costly research. Can quantum computing be useful in the near future, or are we going to have to wait decades for any benefits?

Not all problems can be solved by classical computers, despite their amazing progress

The electro-mechanical Colossus computer broke the enigma code in wartime Britain. Electro-mechanical relays were replaced by electric valves after the war, then by individual transistors in the fifties, and by integrated circuits in the sixties. The number of transistors on computer chips has doubled every two years, and modern chips contain over 50 billion transistors. These cheap, ubiquitous computer chips define the modern world.

Despite this massive increase in computing power there are still some tasks these “classical” computers can’t handle well. Finding a room temperature superconductor would help solve the world’s energy problems, yet research is blocked in part because classical computers can’t simulate quantum systems with many entangled particles. Medical research also suffers because classical computers can’t simulate large molecules and protein folding accurately. Optimisation and machine learning algorithms are sometimes limited by computing resource constraints. It is believed that some of these difficult problems will be solved in the future by quantum computers, which enable a fundamentally different computing paradigm.

Do quantum computers have an advantage over classical computers?

In the seventies the famous American physicist Richard Feynman hit a roadblock when he tried to simulate quantum systems on classical computers. Instead, he suggested in 1982 that these simulations could be carried out on a quantum computer.

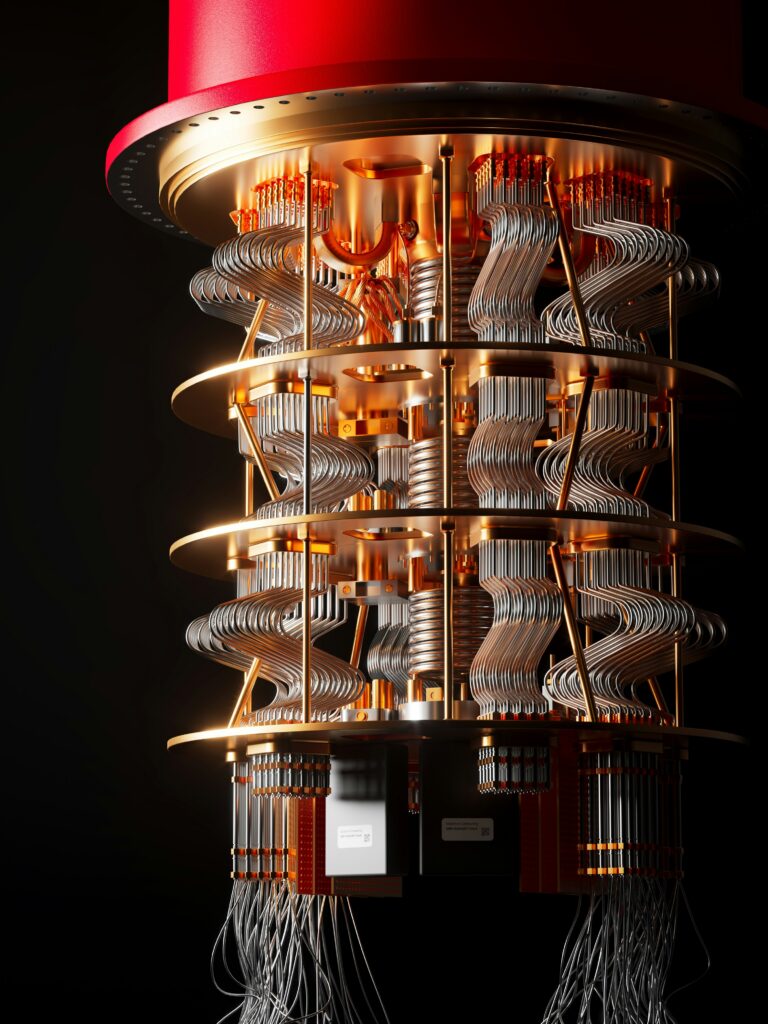

In quantum computers a qubit is a two level system where the levels might be the energy levels of the spin of an electron, or of a superconducting “model atom”. In a future blog we will explore qubit technologies further. Richard Feynman saw that a qubit is more complex, and needs more information to describe it, than a classical bit, which is only ever in a state “0” or “1”. Because of the strange rules of quantum mechanics, quantum “superposition” states are possible where the qubit can be in both the “0” and “1” state simultaneously. Parallel processing over many qubits in superposition can give quantum computers huge benefits over classical computers on some algorithms.

Shor’s 1994 quantum factorisation algorithm based on period finding using the quantum Fourier Transform and Grover’s 1996 quantum search algorithm shocked the research community by suggesting an impressive “quantum speed-up” on real life problems.

We are now in the NISQ era of quantum computing

In 2018 researchers at Google claimed that they had carried out a task on a 53 qubit programmable super-conducting computer that was impossible on a classical computer. Although the task had no business value and the error rate was very high, this demonstration hinted at the future possibilities of quantum computing.

At present we are in the era of “Noisy Intermediate Scale Quantum” (NISQ) devices, a term coined by the American physicist, John Preskill. In the NISQ era the devices available, even the impressive 127 qubit IBM Eagle computer, are too small to solve most problems. High error rates remain another challenge. Quantum states are very fragile, and can quickly decohere because of unwanted coupling between the qubits and the environment. Two qubit gate error rates are high, and errors quickly build up unless the gate depth is small. In the future quantum error correction such as the surface code may allow these errors to be corrected. Computations will be carried out on logical qubits, each of which comprises several physical qubits, so that errors in the physical qubits can be detected and corrected before they impact the logical qubit. Error correction may not be feasible on NISQ devices because of the overhead of the many qubits needed to detect and correct the errors.

The “holy grail” of modern research is a universal quantum computer, where the logical qubits are well protected against errors, and any algorithm can be run. To be able to run Shor’s algorithm this computer is likely to need thousands of logical and hence millions of physical qubits. There are considerable challenges to scaling the existing hardware and implementing the error correction needed. Such a universal quantum computer may not be built for decades.

Algorithms for our NISQ era

Meanwhile there is a massive global research effort to find quantum algorithms for business that can run on NISQ quantum hardware with relatively few qubits and short circuit depths. The most promising family of algorithms are the Variational Quantum Algorithms (VQA) . There has been much interesting, recent research on these algorithms, with many important papers published in the last six months which we will discuss in more detail in a future blog. These are hybrid algorithms, where a quantum computer is “trained” by a classical computer. A cost function is carefully chosen to represent the problem. The quantum computer computes this cost function using tunable parameters. The output is passed to a classical optimiser which calculates new parameters used for further iterations on the quantum computer. The feedback loop is repeated until a good solution with a low cost is found. Algorithms from this family have a wide range of applications including quantum optimisation and quantum machine learning, and exactly as predicted by Richard Feynman, quantum simulations of materials. In these applications an approximate solution is valuable: absolute accuracy is not required.

Quantum Annealing

Another promising line of research is quantum annealing. In most quantum computers a “circuit model” is used. During a computation sequential quantum operations are performed on the qubits. By contrast, in a quantum annealing machine, there are more qubits and these are linked together in a network. The problem to be solved is embedded on the annealing computer by varying the strength of the links and the computer is allowed to settle in a low energy state that hopefully represents the solution. Quantum annealing devices seem well suited to certain types of optimisation problems, for example, improving traffic flow.

Current status

There are several case studies where quantum computers have been used to solve parts of real-life business problems. Typically solving the entire problem is outside the capability of existing devices. For example, part of a financial portfolio can be optimised, but not the whole portfolio. Quantum device hardware will continue to improve and we believe that in a few years it will be quicker and cheaper to solve some problems on a classical computer than a quantum computer.

Conclusion

Most tasks, such as accounting applications and e-mail are handled well by classical computers and there is little point in using a quantum computer. Other problems, such as Shor’s factoring algorithm, are liable to remain out of the reach of quantum computers for decades. However, in the near term, there is real promise that quantum annealing machines and hybrid variational quantum algorithms run on NISQ machines will solve real business problems. Ultimately, it is extremely plausible that large universal quantum computers, well protected against noise, will be built, and these will change our world, making discoveries such as new drugs and room temperature superconductors.